# Hyperparameter Search using Trainer API

🤗 Transformers provides a `Trainer` class optimized for training 🤗 Transformers models, making it easier to start training without manually writing your own training loop. The `Trainer` provides API for hyperparameter search. This doc shows how to enable it in example.

## Hyperparameter Search backend

`Trainer` supports four hyperparameter search backends currently:

[optuna](https://optuna.org/), [sigopt](https://sigopt.com/), [raytune](https://docs.ray.io/en/latest/tune/index.html) and [wandb](https://wandb.ai/site/sweeps).

you should install them before using them as the hyperparameter search backend

```bash

pip install optuna/sigopt/wandb/ray[tune]

```

## How to enable Hyperparameter search in example

Define the hyperparameter search space, different backends need different format.

For sigopt, see sigopt [object_parameter](https://docs.sigopt.com/ai-module-api-references/api_reference/objects/object_parameter), it's like following:

```py

>>> def sigopt_hp_space(trial):

... return [

... {"bounds": {"min": 1e-6, "max": 1e-4}, "name": "learning_rate", "type": "double"},

... {

... "categorical_values": ["16", "32", "64", "128"],

... "name": "per_device_train_batch_size",

... "type": "categorical",

... },

... ]

```

For optuna, see optuna [object_parameter](https://optuna.readthedocs.io/en/stable/tutorial/10_key_features/002_configurations.html#sphx-glr-tutorial-10-key-features-002-configurations-py), it's like following:

```py

>>> def optuna_hp_space(trial):

... return {

... "learning_rate": trial.suggest_float("learning_rate", 1e-6, 1e-4, log=True),

... "per_device_train_batch_size": trial.suggest_categorical("per_device_train_batch_size", [16, 32, 64, 128]),

... }

```

Optuna provides multi-objective HPO. You can pass `direction` in `hyperparameter_search` and define your own compute_objective to return multiple objective values. The Pareto Front (`List[BestRun]`) will be returned in hyperparameter_search, you should refer to the test case `TrainerHyperParameterMultiObjectOptunaIntegrationTest` in [test_trainer](https://github.com/huggingface/transformers/blob/main/tests/trainer/test_trainer.py). It's like following

```py

>>> best_trials = trainer.hyperparameter_search(

... direction=["minimize", "maximize"],

... backend="optuna",

... hp_space=optuna_hp_space,

... n_trials=20,

... compute_objective=compute_objective,

... )

```

For raytune, see raytune [object_parameter](https://docs.ray.io/en/latest/tune/api/search_space.html), it's like following:

```py

>>> def ray_hp_space(trial):

... return {

... "learning_rate": tune.loguniform(1e-6, 1e-4),

... "per_device_train_batch_size": tune.choice([16, 32, 64, 128]),

... }

```

For wandb, see wandb [object_parameter](https://docs.wandb.ai/guides/sweeps/configuration), it's like following:

```py

>>> def wandb_hp_space(trial):

... return {

... "method": "random",

... "metric": {"name": "objective", "goal": "minimize"},

... "parameters": {

... "learning_rate": {"distribution": "uniform", "min": 1e-6, "max": 1e-4},

... "per_device_train_batch_size": {"values": [16, 32, 64, 128]},

... },

... }

```

Define a `model_init` function and pass it to the `Trainer`, as an example:

```py

>>> def model_init(trial):

... return AutoModelForSequenceClassification.from_pretrained(

... model_args.model_name_or_path,

... from_tf=bool(".ckpt" in model_args.model_name_or_path),

... config=config,

... cache_dir=model_args.cache_dir,

... revision=model_args.model_revision,

... token=True if model_args.use_auth_token else None,

... )

```

Create a `Trainer` with your `model_init` function, training arguments, training and test datasets, and evaluation function:

```py

>>> trainer = Trainer(

... model=None,

... args=training_args,

... train_dataset=small_train_dataset,

... eval_dataset=small_eval_dataset,

... compute_metrics=compute_metrics,

... processing_class=tokenizer,

... model_init=model_init,

... data_collator=data_collator,

... )

```

Call hyperparameter search, get the best trial parameters, backend could be `"optuna"`/`"sigopt"`/`"wandb"`/`"ray"`. direction can be`"minimize"` or `"maximize"`, which indicates whether to optimize greater or lower objective.

You could define your own compute_objective function, if not defined, the default compute_objective will be called, and the sum of eval metric like f1 is returned as objective value.

```py

>>> best_trial = trainer.hyperparameter_search(

... direction="maximize",

... backend="optuna",

... hp_space=optuna_hp_space,

... n_trials=20,

... compute_objective=compute_objective,

... )

```

## Hyperparameter search For DDP finetune

Currently, Hyperparameter search for DDP is enabled for optuna and sigopt. Only the rank-zero process will generate the search trial and pass the argument to other ranks.

# Fully Sharded Data Parallel

[Fully Sharded Data Parallel (FSDP)](https://pytorch.org/blog/introducing-pytorch-fully-sharded-data-parallel-api/) is a data parallel method that shards a model's parameters, gradients and optimizer states across the number of available GPUs (also called workers or *rank*). Unlike [DistributedDataParallel (DDP)](https://pytorch.org/docs/stable/generated/torch.nn.parallel.DistributedDataParallel.html), FSDP reduces memory-usage because a model is replicated on each GPU. This improves GPU memory-efficiency and allows you to train much larger models on fewer GPUs. FSDP is integrated with the Accelerate, a library for easily managing training in distributed environments, which means it is available for use from the `Trainer` class.

Before you start, make sure Accelerate is installed and at least PyTorch 2.1.0 or newer.

```bash

pip install accelerate

```

## FSDP configuration

To start, run the [`accelerate config`](https://huggingface.co/docs/accelerate/package_reference/cli#accelerate-config) command to create a configuration file for your training environment. Accelerate uses this configuration file to automatically setup the correct training environment based on your selected training options in `accelerate config`.

```bash

accelerate config

```

When you run `accelerate config`, you'll be prompted with a series of options to configure your training environment. This section covers some of the most important FSDP options. To learn more about the other available FSDP options, take a look at the [fsdp_config](https://huggingface.co/docs/transformers/main_classes/trainer#transformers.TrainingArguments.fsdp_config) parameters.

### Sharding strategy

FSDP offers a number of sharding strategies to select from:

* `FULL_SHARD` - shards model parameters, gradients and optimizer states across workers; select `1` for this option

* `SHARD_GRAD_OP`- shard gradients and optimizer states across workers; select `2` for this option

* `NO_SHARD` - don't shard anything (this is equivalent to DDP); select `3` for this option

* `HYBRID_SHARD` - shard model parameters, gradients and optimizer states within each worker where each worker also has a full copy; select `4` for this option

* `HYBRID_SHARD_ZERO2` - shard gradients and optimizer states within each worker where each worker also has a full copy; select `5` for this option

This is enabled by the `fsdp_sharding_strategy` flag.

### CPU offload

You could also offload parameters and gradients when they are not in use to the CPU to save even more GPU memory and help you fit large models where even FSDP may not be sufficient. This is enabled by setting `fsdp_offload_params: true` when running `accelerate config`.

### Wrapping policy

FSDP is applied by wrapping each layer in the network. The wrapping is usually applied in a nested way where the full weights are discarded after each forward pass to save memory for use in the next layer. The *auto wrapping* policy is the simplest way to implement this and you don't need to change any code. You should select `fsdp_auto_wrap_policy: TRANSFORMER_BASED_WRAP` to wrap a Transformer layer and `fsdp_transformer_layer_cls_to_wrap` to specify which layer to wrap (for example `BertLayer`).

Otherwise, you can choose a size-based wrapping policy where FSDP is applied to a layer if it exceeds a certain number of parameters. This is enabled by setting `fsdp_wrap_policy: SIZE_BASED_WRAP` and `min_num_param` to the desired size threshold.

### Checkpointing

Intermediate checkpoints should be saved with `fsdp_state_dict_type: SHARDED_STATE_DICT` because saving the full state dict with CPU offloading on rank 0 takes a lot of time and often results in `NCCL Timeout` errors due to indefinite hanging during broadcasting. You can resume training with the sharded state dicts with the [load_state](https://huggingface.co/docs/accelerate/main/en/package_reference/accelerator#accelerate.Accelerator.load_state)` method.

```py

# directory containing checkpoints

accelerator.load_state("ckpt")

```

However, when training ends, you want to save the full state dict because sharded state dict is only compatible with FSDP.

```py

if trainer.is_fsdp_enabled:

trainer.accelerator.state.fsdp_plugin.set_state_dict_type("FULL_STATE_DICT")

trainer.save_model(script_args.output_dir)

```

### TPU

[PyTorch XLA](https://pytorch.org/xla/release/2.1/index.html) supports FSDP training for TPUs and it can be enabled by modifying the FSDP configuration file generated by `accelerate config`. In addition to the sharding strategies and wrapping options specified above, you can add the parameters shown below to the file.

```yaml

xla: True # must be set to True to enable PyTorch/XLA

xla_fsdp_settings: # XLA-specific FSDP parameters

xla_fsdp_grad_ckpt: True # use gradient checkpointing

```

The [`xla_fsdp_settings`](https://github.com/pytorch/xla/blob/2e6e183e0724818f137c8135b34ef273dea33318/torch_xla/distributed/fsdp/xla_fully_sharded_data_parallel.py#L128) allow you to configure additional XLA-specific parameters for FSDP.

## Launch training

An example FSDP configuration file may look like:

```yaml

compute_environment: LOCAL_MACHINE

debug: false

distributed_type: FSDP

downcast_bf16: 'no'

fsdp_config:

fsdp_auto_wrap_policy: TRANSFORMER_BASED_WRAP

fsdp_backward_prefetch_policy: BACKWARD_PRE

fsdp_cpu_ram_efficient_loading: true

fsdp_forward_prefetch: false

fsdp_offload_params: true

fsdp_sharding_strategy: 1

fsdp_state_dict_type: SHARDED_STATE_DICT

fsdp_sync_module_states: true

fsdp_transformer_layer_cls_to_wrap: BertLayer

fsdp_use_orig_params: true

machine_rank: 0

main_training_function: main

mixed_precision: bf16

num_machines: 1

num_processes: 2

rdzv_backend: static

same_network: true

tpu_env: []

tpu_use_cluster: false

tpu_use_sudo: false

use_cpu: false

```

To launch training, run the [`accelerate launch`](https://huggingface.co/docs/accelerate/package_reference/cli#accelerate-launch) command and it'll automatically use the configuration file you previously created with `accelerate config`.

```bash

accelerate launch my-trainer-script.py

```

```bash

accelerate launch --fsdp="full shard" --fsdp_config="path/to/fsdp_config/ my-trainer-script.py

```

## Next steps

FSDP can be a powerful tool for training really large models and you have access to more than one GPU or TPU. By sharding the model parameters, optimizer and gradient states, and even offloading them to the CPU when they're inactive, FSDP can reduce the high cost of large-scale training. If you're interested in learning more, the following may be helpful:

* Follow along with the more in-depth Accelerate guide for [FSDP](https://huggingface.co/docs/accelerate/usage_guides/fsdp).

* Read the [Introducing PyTorch Fully Sharded Data Parallel (FSDP) API](https://pytorch.org/blog/introducing-pytorch-fully-sharded-data-parallel-api/) blog post.

* Read the [Scaling PyTorch models on Cloud TPUs with FSDP](https://pytorch.org/blog/scaling-pytorch-models-on-cloud-tpus-with-fsdp/) blog post.

# Perplexity of fixed-length models

Perplexity (PPL) is one of the most common metrics for evaluating language models. Before diving in, we should note

that the metric applies specifically to classical language models (sometimes called autoregressive or causal language

models) and is not well defined for masked language models like BERT (see [summary of the models](model_summary)).

Perplexity is defined as the exponentiated average negative log-likelihood of a sequence. If we have a tokenized

sequence \\(X = (x_0, x_1, \dots, x_t)\\), then the perplexity of \\(X\\) is,

$$\text{PPL}(X) = \exp \left\{ {-\frac{1}{t}\sum_i^t \log p_\theta (x_i|x_{

When working with approximate models, however, we typically have a constraint on the number of tokens the model can

process. The largest version of [GPT-2](model_doc/gpt2), for example, has a fixed length of 1024 tokens, so we

cannot calculate \\(p_\theta(x_t|x_{

This is quick to compute since the perplexity of each segment can be computed in one forward pass, but serves as a poor

approximation of the fully-factorized perplexity and will typically yield a higher (worse) PPL because the model will

have less context at most of the prediction steps.

Instead, the PPL of fixed-length models should be evaluated with a sliding-window strategy. This involves repeatedly

sliding the context window so that the model has more context when making each prediction.

This is a closer approximation to the true decomposition of the sequence probability and will typically yield a more

favorable score. The downside is that it requires a separate forward pass for each token in the corpus. A good

practical compromise is to employ a strided sliding window, moving the context by larger strides rather than sliding by

1 token a time. This allows computation to proceed much faster while still giving the model a large context to make

predictions at each step.

## Example: Calculating perplexity with GPT-2 in 🤗 Transformers

Let's demonstrate this process with GPT-2.

```python

from transformers import GPT2LMHeadModel, GPT2TokenizerFast

device = "cuda"

model_id = "openai-community/gpt2-large"

model = GPT2LMHeadModel.from_pretrained(model_id).to(device)

tokenizer = GPT2TokenizerFast.from_pretrained(model_id)

```

We'll load in the WikiText-2 dataset and evaluate the perplexity using a few different sliding-window strategies. Since

this dataset is small and we're just doing one forward pass over the set, we can just load and encode the entire

dataset in memory.

```python

from datasets import load_dataset

test = load_dataset("wikitext", "wikitext-2-raw-v1", split="test")

encodings = tokenizer("\n\n".join(test["text"]), return_tensors="pt")

```

With 🤗 Transformers, we can simply pass the `input_ids` as the `labels` to our model, and the average negative

log-likelihood for each token is returned as the loss. With our sliding window approach, however, there is overlap in

the tokens we pass to the model at each iteration. We don't want the log-likelihood for the tokens we're just treating

as context to be included in our loss, so we can set these targets to `-100` so that they are ignored. The following

is an example of how we could do this with a stride of `512`. This means that the model will have at least 512 tokens

for context when calculating the conditional likelihood of any one token (provided there are 512 preceding tokens

available to condition on).

```python

import torch

from tqdm import tqdm

max_length = model.config.n_positions

stride = 512

seq_len = encodings.input_ids.size(1)

nll_sum = 0.0

n_tokens = 0

prev_end_loc = 0

for begin_loc in tqdm(range(0, seq_len, stride)):

end_loc = min(begin_loc + max_length, seq_len)

trg_len = end_loc - prev_end_loc # may be different from stride on last loop

input_ids = encodings.input_ids[:, begin_loc:end_loc].to(device)

target_ids = input_ids.clone()

target_ids[:, :-trg_len] = -100

with torch.no_grad():

outputs = model(input_ids, labels=target_ids)

# loss is calculated using CrossEntropyLoss which averages over valid labels

# N.B. the model only calculates loss over trg_len - 1 labels, because it internally shifts the labels

# to the left by 1.

neg_log_likelihood = outputs.loss

# Accumulate the total negative log-likelihood and the total number of tokens

num_valid_tokens = (target_ids != -100).sum().item() # number of valid tokens in target_ids

batch_size = target_ids.size(0)

num_loss_tokens = num_valid_tokens - batch_size # subtract batch_size due to internal label shift

nll_sum += neg_log_likelihood * num_loss_tokens

n_tokens += num_loss_tokens

prev_end_loc = end_loc

if end_loc == seq_len:

break

avg_nll = nll_sum / n_tokens # average negative log-likelihood per token

ppl = torch.exp(avg_nll)

```

Running this with the stride length equal to the max input length is equivalent to the suboptimal, non-sliding-window

strategy we discussed above. The smaller the stride, the more context the model will have in making each prediction,

and the better the reported perplexity will typically be.

When we run the above with `stride = 1024`, i.e. no overlap, the resulting PPL is `19.44`, which is about the same

as the `19.93` reported in the GPT-2 paper. By using `stride = 512` and thereby employing our striding window

strategy, this jumps down to `16.44`. This is not only a more favorable score, but is calculated in a way that is

closer to the true autoregressive decomposition of a sequence likelihood.

# Efficient Training on Multiple CPUs

When training on a single CPU is too slow, we can use multiple CPUs. This guide focuses on PyTorch-based DDP enabling

distributed CPU training efficiently on [bare metal](#usage-in-trainer) and [Kubernetes](#usage-with-kubernetes).

## Intel® oneCCL Bindings for PyTorch

[Intel® oneCCL](https://github.com/oneapi-src/oneCCL) (collective communications library) is a library for efficient distributed deep learning training implementing such collectives like allreduce, allgather, alltoall. For more information on oneCCL, please refer to the [oneCCL documentation](https://spec.oneapi.com/versions/latest/elements/oneCCL/source/index.html) and [oneCCL specification](https://spec.oneapi.com/versions/latest/elements/oneCCL/source/index.html).

Module `oneccl_bindings_for_pytorch` (`torch_ccl` before version 1.12) implements PyTorch C10D ProcessGroup API and can be dynamically loaded as external ProcessGroup and only works on Linux platform now

Check more detailed information for [oneccl_bind_pt](https://github.com/intel/torch-ccl).

### Intel® oneCCL Bindings for PyTorch installation

Wheel files are available for the following Python versions:

| Extension Version | Python 3.7 | Python 3.8 | Python 3.9 | Python 3.10 | Python 3.11 |

| :---------------: | :--------: | :--------: | :--------: | :---------: | :---------: |

| 2.5.0 | | √ | √ | √ | √ |

| 2.4.0 | | √ | √ | √ | √ |

| 2.3.0 | | √ | √ | √ | √ |

| 2.2.0 | | √ | √ | √ | √ |

Please run `pip list | grep torch` to get your `pytorch_version`.

```bash

pip install oneccl_bind_pt=={pytorch_version} -f https://developer.intel.com/ipex-whl-stable-cpu

```

where `{pytorch_version}` should be your PyTorch version, for instance 2.4.0.

Check more approaches for [oneccl_bind_pt installation](https://github.com/intel/torch-ccl).

Versions of oneCCL and PyTorch must match.

## Intel® MPI library

Use this standards-based MPI implementation to deliver flexible, efficient, scalable cluster messaging on Intel® architecture. This component is part of the Intel® oneAPI HPC Toolkit.

oneccl_bindings_for_pytorch is installed along with the MPI tool set. Need to source the environment before using it.

```bash

oneccl_bindings_for_pytorch_path=$(python -c "from oneccl_bindings_for_pytorch import cwd; print(cwd)")

source $oneccl_bindings_for_pytorch_path/env/setvars.sh

```

#### Intel® Extension for PyTorch installation

Intel Extension for PyTorch (IPEX) provides performance optimizations for CPU training with both Float32 and BFloat16 (refer to the [single CPU section](./perf_train_cpu) to learn more).

The following "Usage in Trainer" takes mpirun in Intel® MPI library as an example.

## Usage in Trainer

To enable multi CPU distributed training in the Trainer with the ccl backend, users should add **`--ddp_backend ccl`** in the command arguments.

Let's see an example with the [question-answering example](https://github.com/huggingface/transformers/tree/main/examples/pytorch/question-answering)

The following command enables training with 2 processes on one Xeon node, with one process running per one socket. The variables OMP_NUM_THREADS/CCL_WORKER_COUNT can be tuned for optimal performance.

```shell script

export CCL_WORKER_COUNT=1

export MASTER_ADDR=127.0.0.1

mpirun -n 2 -genv OMP_NUM_THREADS=23 \

python3 run_qa.py \

--model_name_or_path google-bert/bert-large-uncased \

--dataset_name squad \

--do_train \

--do_eval \

--per_device_train_batch_size 12 \

--learning_rate 3e-5 \

--num_train_epochs 2 \

--max_seq_length 384 \

--doc_stride 128 \

--output_dir /tmp/debug_squad/ \

--no_cuda \

--ddp_backend ccl \

--use_ipex

```

The following command enables training with a total of four processes on two Xeons (node0 and node1, taking node0 as the main process), ppn (processes per node) is set to 2, with one process running per one socket. The variables OMP_NUM_THREADS/CCL_WORKER_COUNT can be tuned for optimal performance.

In node0, you need to create a configuration file which contains the IP addresses of each node (for example hostfile) and pass that configuration file path as an argument.

```shell script

cat hostfile

xxx.xxx.xxx.xxx #node0 ip

xxx.xxx.xxx.xxx #node1 ip

```

Now, run the following command in node0 and **4DDP** will be enabled in node0 and node1 with BF16 auto mixed precision:

```shell script

export CCL_WORKER_COUNT=1

export MASTER_ADDR=xxx.xxx.xxx.xxx #node0 ip

mpirun -f hostfile -n 4 -ppn 2 \

-genv OMP_NUM_THREADS=23 \

python3 run_qa.py \

--model_name_or_path google-bert/bert-large-uncased \

--dataset_name squad \

--do_train \

--do_eval \

--per_device_train_batch_size 12 \

--learning_rate 3e-5 \

--num_train_epochs 2 \

--max_seq_length 384 \

--doc_stride 128 \

--output_dir /tmp/debug_squad/ \

--no_cuda \

--ddp_backend ccl \

--use_ipex \

--bf16

```

## Usage with Kubernetes

The same distributed training job from the previous section can be deployed to a Kubernetes cluster using the

[Kubeflow PyTorchJob training operator](https://www.kubeflow.org/docs/components/training/user-guides/pytorch).

### Setup

This example assumes that you have:

* Access to a Kubernetes cluster with [Kubeflow installed](https://www.kubeflow.org/docs/started/installing-kubeflow)

* [`kubectl`](https://kubernetes.io/docs/tasks/tools) installed and configured to access the Kubernetes cluster

* A [Persistent Volume Claim (PVC)](https://kubernetes.io/docs/concepts/storage/persistent-volumes) that can be used

to store datasets and model files. There are multiple options for setting up the PVC including using an NFS

[storage class](https://kubernetes.io/docs/concepts/storage/storage-classes) or a cloud storage bucket.

* A Docker container that includes your model training script and all the dependencies needed to run the script. For

distributed CPU training jobs, this typically includes PyTorch, Transformers, Intel Extension for PyTorch, Intel

oneCCL Bindings for PyTorch, and OpenSSH to communicate between the containers.

The snippet below is an example of a Dockerfile that uses a base image that supports distributed CPU training and then

extracts a Transformers release to the `/workspace` directory, so that the example scripts are included in the image:

```dockerfile

FROM intel/intel-optimized-pytorch:2.4.0-pip-multinode

RUN apt-get update -y && \

apt-get install -y --no-install-recommends --fix-missing \

google-perftools \

libomp-dev

WORKDIR /workspace

# Download and extract the transformers code

ARG HF_TRANSFORMERS_VER="4.46.0"

RUN pip install --no-cache-dir \

transformers==${HF_TRANSFORMERS_VER} && \

mkdir transformers && \

curl -sSL --retry 5 https://github.com/huggingface/transformers/archive/refs/tags/v${HF_TRANSFORMERS_VER}.tar.gz | tar -C transformers --strip-components=1 -xzf -

```

The image needs to be built and copied to the cluster's nodes or pushed to a container registry prior to deploying the

PyTorchJob to the cluster.

### PyTorchJob Specification File

The [Kubeflow PyTorchJob](https://www.kubeflow.org/docs/components/training/user-guides/pytorch) is used to run the distributed

training job on the cluster. The yaml file for the PyTorchJob defines parameters such as:

* The name of the PyTorchJob

* The number of replicas (workers)

* The python script and it's parameters that will be used to run the training job

* The types of resources (node selector, memory, and CPU) needed for each worker

* The image/tag for the Docker container to use

* Environment variables

* A volume mount for the PVC

The volume mount defines a path where the PVC will be mounted in the container for each worker pod. This location can be

used for the dataset, checkpoint files, and the saved model after training completes.

The snippet below is an example of a yaml file for a PyTorchJob with 4 workers running the

[question-answering example](https://github.com/huggingface/transformers/tree/main/examples/pytorch/question-answering).

```yaml

apiVersion: "kubeflow.org/v1"

kind: PyTorchJob

metadata:

name: transformers-pytorchjob

spec:

elasticPolicy:

rdzvBackend: c10d

minReplicas: 1

maxReplicas: 4

maxRestarts: 10

pytorchReplicaSpecs:

Worker:

replicas: 4 # The number of worker pods

restartPolicy: OnFailure

template:

spec:

containers:

- name: pytorch

image: : # Specify the docker image to use for the worker pods

imagePullPolicy: IfNotPresent

command: ["/bin/bash", "-c"]

args:

- >-

cd /workspace/transformers;

pip install -r /workspace/transformers/examples/pytorch/question-answering/requirements.txt;

source /usr/local/lib/python3.10/dist-packages/oneccl_bindings_for_pytorch/env/setvars.sh;

torchrun /workspace/transformers/examples/pytorch/question-answering/run_qa.py \

--model_name_or_path distilbert/distilbert-base-uncased \

--dataset_name squad \

--do_train \

--do_eval \

--per_device_train_batch_size 12 \

--learning_rate 3e-5 \

--num_train_epochs 2 \

--max_seq_length 384 \

--doc_stride 128 \

--output_dir /tmp/pvc-mount/output_$(date +%Y%m%d_%H%M%S) \

--no_cuda \

--ddp_backend ccl \

--bf16 \

--use_ipex;

env:

- name: LD_PRELOAD

value: "/usr/lib/x86_64-linux-gnu/libtcmalloc.so.4.5.9:/usr/local/lib/libiomp5.so"

- name: TRANSFORMERS_CACHE

value: "/tmp/pvc-mount/transformers_cache"

- name: HF_DATASETS_CACHE

value: "/tmp/pvc-mount/hf_datasets_cache"

- name: LOGLEVEL

value: "INFO"

- name: CCL_WORKER_COUNT

value: "1"

- name: OMP_NUM_THREADS # Can be tuned for optimal performance

value: "240"

resources:

limits:

cpu: 240 # Update the CPU and memory limit values based on your nodes

memory: 128Gi

requests:

cpu: 240 # Update the CPU and memory request values based on your nodes

memory: 128Gi

volumeMounts:

- name: pvc-volume

mountPath: /tmp/pvc-mount

- mountPath: /dev/shm

name: dshm

restartPolicy: Never

nodeSelector: # Optionally use nodeSelector to match a certain node label for the worker pods

node-type: gnr

volumes:

- name: pvc-volume

persistentVolumeClaim:

claimName: transformers-pvc

- name: dshm

emptyDir:

medium: Memory

```

To run this example, update the yaml based on your training script and the nodes in your cluster.

The CPU resource limits/requests in the yaml are defined in

[cpu units](https://kubernetes.io/docs/concepts/configuration/manage-resources-containers/#meaning-of-cpu)

where 1 CPU unit is equivalent to 1 physical CPU core or 1 virtual core (depending on whether the node is a physical

host or a VM). The amount of CPU and memory limits/requests defined in the yaml should be less than the amount of

available CPU/memory capacity on a single machine. It is usually a good idea to not use the entire machine's capacity in

order to leave some resources for the kubelet and OS. In order to get ["guaranteed"](https://kubernetes.io/docs/concepts/workloads/pods/pod-qos/#guaranteed)

[quality of service](https://kubernetes.io/docs/tasks/configure-pod-container/quality-service-pod) for the worker pods,

set the same CPU and memory amounts for both the resource limits and requests.

### Deploy

After the PyTorchJob spec has been updated with values appropriate for your cluster and training job, it can be deployed

to the cluster using:

```bash

export NAMESPACE=

kubectl create -f pytorchjob.yaml -n ${NAMESPACE}

```

The `kubectl get pods -n ${NAMESPACE}` command can then be used to list the pods in your namespace. You should see

the worker pods for the PyTorchJob that was just deployed. At first, they will probably have a status of "Pending" as

the containers get pulled and created, then the status should change to "Running".

```

NAME READY STATUS RESTARTS AGE

...

transformers-pytorchjob-worker-0 1/1 Running 0 7m37s

transformers-pytorchjob-worker-1 1/1 Running 0 7m37s

transformers-pytorchjob-worker-2 1/1 Running 0 7m37s

transformers-pytorchjob-worker-3 1/1 Running 0 7m37s

...

```

The logs for worker can be viewed using `kubectl logs -n ${NAMESPACE}`. Add `-f` to stream the logs, for example:

```bash

kubectl logs transformers-pytorchjob-worker-0 -n ${NAMESPACE} -f

```

After the training job completes, the trained model can be copied from the PVC or storage location. When you are done

with the job, the PyTorchJob resource can be deleted from the cluster using `kubectl delete -f pytorchjob.yaml -n ${NAMESPACE}`.

## Summary

This guide covered running distributed PyTorch training jobs using multiple CPUs on bare metal and on a Kubernetes

cluster. Both cases utilize Intel Extension for PyTorch and Intel oneCCL Bindings for PyTorch for optimal training

performance, and can be used as a template to run your own workload on multiple nodes.

# GPU inference

GPUs are the standard choice of hardware for machine learning, unlike CPUs, because they are optimized for memory bandwidth and parallelism. To keep up with the larger sizes of modern models or to run these large models on existing and older hardware, there are several optimizations you can use to speed up GPU inference. In this guide, you'll learn how to use FlashAttention-2 (a more memory-efficient attention mechanism), BetterTransformer (a PyTorch native fastpath execution), and bitsandbytes to quantize your model to a lower precision. Finally, learn how to use 🤗 Optimum to accelerate inference with ONNX Runtime on Nvidia and AMD GPUs.

The majority of the optimizations described here also apply to multi-GPU setups!

## FlashAttention-2

FlashAttention-2 is experimental and may change considerably in future versions.

[FlashAttention-2](https://huggingface.co/papers/2205.14135) is a faster and more efficient implementation of the standard attention mechanism that can significantly speedup inference by:

1. additionally parallelizing the attention computation over sequence length

2. partitioning the work between GPU threads to reduce communication and shared memory reads/writes between them

FlashAttention-2 is currently supported for the following architectures:

* [Bark](https://huggingface.co/docs/transformers/model_doc/bark#transformers.BarkModel)

* [Bart](https://huggingface.co/docs/transformers/model_doc/bart#transformers.BartModel)

* [Chameleon](https://huggingface.co/docs/transformers/model_doc/chameleon#transformers.Chameleon)

* [CLIP](https://huggingface.co/docs/transformers/model_doc/clip#transformers.CLIPModel)

* [Cohere](https://huggingface.co/docs/transformers/model_doc/cohere#transformers.CohereModel)

* [GLM](https://huggingface.co/docs/transformers/model_doc/glm#transformers.GLMModel)

* [Dbrx](https://huggingface.co/docs/transformers/model_doc/dbrx#transformers.DbrxModel)

* [DistilBert](https://huggingface.co/docs/transformers/model_doc/distilbert#transformers.DistilBertModel)

* [Gemma](https://huggingface.co/docs/transformers/model_doc/gemma#transformers.GemmaModel)

* [Gemma2](https://huggingface.co/docs/transformers/model_doc/gemma2#transformers.Gemma2Model)

* [GPT2](https://huggingface.co/docs/transformers/model_doc/gpt2)

* [GPTBigCode](https://huggingface.co/docs/transformers/model_doc/gpt_bigcode#transformers.GPTBigCodeModel)

* [GPTNeo](https://huggingface.co/docs/transformers/model_doc/gpt_neo#transformers.GPTNeoModel)

* [GPTNeoX](https://huggingface.co/docs/transformers/model_doc/gpt_neox#transformers.GPTNeoXModel)

* [GPT-J](https://huggingface.co/docs/transformers/model_doc/gptj#transformers.GPTJModel)

* [Granite](https://huggingface.co/docs/transformers/model_doc/granite#transformers.GraniteModel)

* [GraniteMoe](https://huggingface.co/docs/transformers/model_doc/granitemoe#transformers.GraniteMoeModel)

* [Idefics2](https://huggingface.co/docs/transformers/model_doc/idefics2#transformers.Idefics2Model)

* [Idefics3](https://huggingface.co/docs/transformers/model_doc/idefics3#transformers.Idefics3Model)

* [Falcon](https://huggingface.co/docs/transformers/model_doc/falcon#transformers.FalconModel)

* [JetMoe](https://huggingface.co/docs/transformers/model_doc/jetmoe#transformers.JetMoeModel)

* [Jamba](https://huggingface.co/docs/transformers/model_doc/jamba#transformers.JambaModel)

* [Llama](https://huggingface.co/docs/transformers/model_doc/llama#transformers.LlamaModel)

* [Llava](https://huggingface.co/docs/transformers/model_doc/llava)

* [Llava-NeXT](https://huggingface.co/docs/transformers/model_doc/llava_next)

* [Llava-NeXT-Video](https://huggingface.co/docs/transformers/model_doc/llava_next_video)

* [LLaVA-Onevision](https://huggingface.co/docs/transformers/model_doc/llava_onevision)

* [Mimi](https://huggingface.co/docs/transformers/model_doc/mimi)

* [VipLlava](https://huggingface.co/docs/transformers/model_doc/vipllava)

* [VideoLlava](https://huggingface.co/docs/transformers/model_doc/video_llava)

* [M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100)

* [MBart](https://huggingface.co/docs/transformers/model_doc/mbart#transformers.MBartModel)

* [Mistral](https://huggingface.co/docs/transformers/model_doc/mistral#transformers.MistralModel)

* [Mixtral](https://huggingface.co/docs/transformers/model_doc/mixtral#transformers.MixtralModel)

* [Moshi](https://huggingface.co/docs/transformers/model_doc/moshi#transformers.MoshiModel)

* [Musicgen](https://huggingface.co/docs/transformers/model_doc/musicgen#transformers.MusicgenModel)

* [MusicGen Melody](https://huggingface.co/docs/transformers/model_doc/musicgen_melody#transformers.MusicgenMelodyModel)

* [Nemotron](https://huggingface.co/docs/transformers/model_doc/nemotron)

* [NLLB](https://huggingface.co/docs/transformers/model_doc/nllb)

* [OLMo](https://huggingface.co/docs/transformers/model_doc/olmo#transformers.OlmoModel)

* [OLMoE](https://huggingface.co/docs/transformers/model_doc/olmoe#transformers.OlmoeModel)

* [OPT](https://huggingface.co/docs/transformers/model_doc/opt#transformers.OPTModel)

* [PaliGemma](https://huggingface.co/docs/transformers/model_doc/paligemma#transformers.PaliGemmaForConditionalGeneration)

* [Phi](https://huggingface.co/docs/transformers/model_doc/phi#transformers.PhiModel)

* [Phi3](https://huggingface.co/docs/transformers/model_doc/phi3#transformers.Phi3Model)

* [PhiMoE](https://huggingface.co/docs/transformers/model_doc/phimoe#transformers.PhimoeModel)

* [StableLm](https://huggingface.co/docs/transformers/model_doc/stablelm#transformers.StableLmModel)

* [Starcoder2](https://huggingface.co/docs/transformers/model_doc/starcoder2#transformers.Starcoder2Model)

* [Qwen2](https://huggingface.co/docs/transformers/model_doc/qwen2#transformers.Qwen2Model)

* [Qwen2Audio](https://huggingface.co/docs/transformers/model_doc/qwen2_audio#transformers.Qwen2AudioEncoder)

* [Qwen2MoE](https://huggingface.co/docs/transformers/model_doc/qwen2_moe#transformers.Qwen2MoeModel)

* [Qwen2VL](https://huggingface.co/docs/transformers/model_doc/qwen2_vl#transformers.Qwen2VLModel)

* [RAG](https://huggingface.co/docs/transformers/model_doc/rag#transformers.RagModel)

* [SpeechEncoderDecoder](https://huggingface.co/docs/transformers/model_doc/speech_encoder_decoder#transformers.SpeechEncoderDecoderModel)

* [VisionEncoderDecoder](https://huggingface.co/docs/transformers/model_doc/vision_encoder_decoder#transformers.VisionEncoderDecoderModel)

* [VisionTextDualEncoder](https://huggingface.co/docs/transformers/model_doc/vision_text_dual_encoder#transformers.VisionTextDualEncoderModel)

* [Whisper](https://huggingface.co/docs/transformers/model_doc/whisper#transformers.WhisperModel)

* [Wav2Vec2](https://huggingface.co/docs/transformers/model_doc/wav2vec2#transformers.Wav2Vec2Model)

* [Hubert](https://huggingface.co/docs/transformers/model_doc/hubert#transformers.HubertModel)

* [data2vec_audio](https://huggingface.co/docs/transformers/main/en/model_doc/data2vec#transformers.Data2VecAudioModel)

* [Sew](https://huggingface.co/docs/transformers/main/en/model_doc/sew#transformers.SEWModel)

* [SigLIP](https://huggingface.co/docs/transformers/model_doc/siglip)

* [UniSpeech](https://huggingface.co/docs/transformers/v4.39.3/en/model_doc/unispeech#transformers.UniSpeechModel)

* [unispeech_sat](https://huggingface.co/docs/transformers/v4.39.3/en/model_doc/unispeech-sat#transformers.UniSpeechSatModel)

You can request to add FlashAttention-2 support for another model by opening a GitHub Issue or Pull Request.

Before you begin, make sure you have FlashAttention-2 installed.

```bash

pip install flash-attn --no-build-isolation

```

We strongly suggest referring to the detailed [installation instructions](https://github.com/Dao-AILab/flash-attention?tab=readme-ov-file#installation-and-features) to learn more about supported hardware and data types!

FlashAttention-2 is also supported on AMD GPUs and current support is limited to **Instinct MI210**, **Instinct MI250** and **Instinct MI300**. We strongly suggest using this [Dockerfile](https://github.com/huggingface/optimum-amd/tree/main/docker/transformers-pytorch-amd-gpu-flash/Dockerfile) to use FlashAttention-2 on AMD GPUs.

To enable FlashAttention-2, pass the argument `attn_implementation="flash_attention_2"` to `from_pretrained()`:

```python

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer, LlamaForCausalLM

model_id = "tiiuae/falcon-7b"

tokenizer = AutoTokenizer.from_pretrained(model_id)

model = AutoModelForCausalLM.from_pretrained(

model_id,

torch_dtype=torch.bfloat16,

attn_implementation="flash_attention_2",

)

```

FlashAttention-2 can only be used when the model's dtype is `fp16` or `bf16`. Make sure to cast your model to the appropriate dtype and load them on a supported device before using FlashAttention-2.

You can also set `use_flash_attention_2=True` to enable FlashAttention-2 but it is deprecated in favor of `attn_implementation="flash_attention_2"`.

FlashAttention-2 can be combined with other optimization techniques like quantization to further speedup inference. For example, you can combine FlashAttention-2 with 8-bit or 4-bit quantization:

```py

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer, LlamaForCausalLM

model_id = "tiiuae/falcon-7b"

tokenizer = AutoTokenizer.from_pretrained(model_id)

# load in 8bit

model = AutoModelForCausalLM.from_pretrained(

model_id,

load_in_8bit=True,

attn_implementation="flash_attention_2",

)

# load in 4bit

model = AutoModelForCausalLM.from_pretrained(

model_id,

load_in_4bit=True,

attn_implementation="flash_attention_2",

)

```

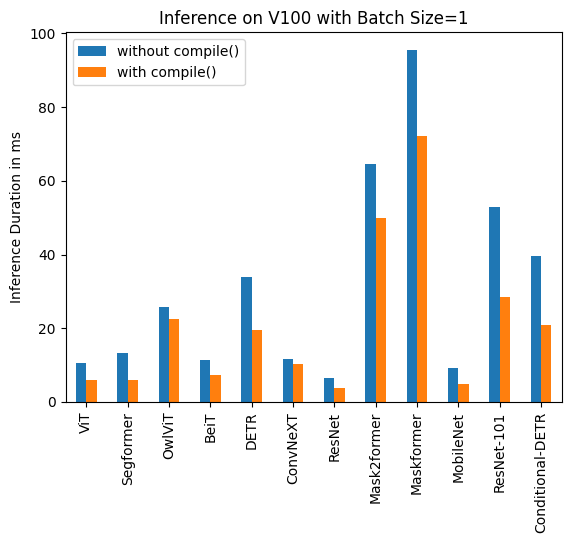

### Expected speedups

You can benefit from considerable speedups for inference, especially for inputs with long sequences. However, since FlashAttention-2 does not support computing attention scores with padding tokens, you must manually pad/unpad the attention scores for batched inference when the sequence contains padding tokens. This leads to a significant slowdown for batched generations with padding tokens.

To overcome this, you should use FlashAttention-2 without padding tokens in the sequence during training (by packing a dataset or [concatenating sequences](https://github.com/huggingface/transformers/blob/main/examples/pytorch/language-modeling/run_clm.py#L516) until reaching the maximum sequence length).

For a single forward pass on [tiiuae/falcon-7b](https://hf.co/tiiuae/falcon-7b) with a sequence length of 4096 and various batch sizes without padding tokens, the expected speedup is:

For a single forward pass on [meta-llama/Llama-7b-hf](https://hf.co/meta-llama/Llama-7b-hf) with a sequence length of 4096 and various batch sizes without padding tokens, the expected speedup is:

For sequences with padding tokens (generating with padding tokens), you need to unpad/pad the input sequences to correctly compute the attention scores. With a relatively small sequence length, a single forward pass creates overhead leading to a small speedup (in the example below, 30% of the input is filled with padding tokens):

But for larger sequence lengths, you can expect even more speedup benefits:

FlashAttention is more memory efficient, meaning you can train on much larger sequence lengths without running into out-of-memory issues. You can potentially reduce memory usage up to 20x for larger sequence lengths. Take a look at the [flash-attention](https://github.com/Dao-AILab/flash-attention) repository for more details.

## PyTorch scaled dot product attention

PyTorch's [`torch.nn.functional.scaled_dot_product_attention`](https://pytorch.org/docs/master/generated/torch.nn.functional.scaled_dot_product_attention.html) (SDPA) can also call FlashAttention and memory-efficient attention kernels under the hood. SDPA support is currently being added natively in Transformers and is used by default for `torch>=2.1.1` when an implementation is available. You may also set `attn_implementation="sdpa"` in `from_pretrained()` to explicitly request SDPA to be used.

For now, Transformers supports SDPA inference and training for the following architectures:

* [Albert](https://huggingface.co/docs/transformers/model_doc/albert#transformers.AlbertModel)

* [Audio Spectrogram Transformer](https://huggingface.co/docs/transformers/model_doc/audio-spectrogram-transformer#transformers.ASTModel)

* [Bart](https://huggingface.co/docs/transformers/model_doc/bart#transformers.BartModel)

* [Bert](https://huggingface.co/docs/transformers/model_doc/bert#transformers.BertModel)

* [BioGpt](https://huggingface.co/docs/transformers/model_doc/biogpt#transformers.BioGptModel)

* [CamemBERT](https://huggingface.co/docs/transformers/model_doc/camembert#transformers.CamembertModel)

* [Chameleon](https://huggingface.co/docs/transformers/model_doc/chameleon#transformers.Chameleon)

* [CLIP](https://huggingface.co/docs/transformers/model_doc/clip#transformers.CLIPModel)

* [GLM](https://huggingface.co/docs/transformers/model_doc/glm#transformers.GLMModel)

* [Cohere](https://huggingface.co/docs/transformers/model_doc/cohere#transformers.CohereModel)

* [data2vec_audio](https://huggingface.co/docs/transformers/main/en/model_doc/data2vec#transformers.Data2VecAudioModel)

* [Dbrx](https://huggingface.co/docs/transformers/model_doc/dbrx#transformers.DbrxModel)

* [DeiT](https://huggingface.co/docs/transformers/model_doc/deit#transformers.DeiTModel)

* [Dinov2](https://huggingface.co/docs/transformers/en/model_doc/dinov2)

* [DistilBert](https://huggingface.co/docs/transformers/model_doc/distilbert#transformers.DistilBertModel)

* [Dpr](https://huggingface.co/docs/transformers/model_doc/dpr#transformers.DprReader)

* [EncoderDecoder](https://huggingface.co/docs/transformers/model_doc/encoder_decoder#transformers.EncoderDecoderModel)

* [Falcon](https://huggingface.co/docs/transformers/model_doc/falcon#transformers.FalconModel)

* [Gemma](https://huggingface.co/docs/transformers/model_doc/gemma#transformers.GemmaModel)

* [Gemma2](https://huggingface.co/docs/transformers/model_doc/gemma2#transformers.Gemma2Model)

* [GPT2](https://huggingface.co/docs/transformers/model_doc/gpt2)

* [GPTBigCode](https://huggingface.co/docs/transformers/model_doc/gpt_bigcode#transformers.GPTBigCodeModel)

* [GPTNeoX](https://huggingface.co/docs/transformers/model_doc/gpt_neox#transformers.GPTNeoXModel)

* [Hubert](https://huggingface.co/docs/transformers/model_doc/hubert#transformers.HubertModel)

* [Idefics](https://huggingface.co/docs/transformers/model_doc/idefics#transformers.IdeficsModel)

* [Idefics2](https://huggingface.co/docs/transformers/model_doc/idefics2#transformers.Idefics2Model)

* [Idefics3](https://huggingface.co/docs/transformers/model_doc/idefics3#transformers.Idefics3Model)

* [Granite](https://huggingface.co/docs/transformers/model_doc/granite#transformers.GraniteModel)

* [GraniteMoe](https://huggingface.co/docs/transformers/model_doc/granitemoe#transformers.GraniteMoeModel)

* [JetMoe](https://huggingface.co/docs/transformers/model_doc/jetmoe#transformers.JetMoeModel)

* [Jamba](https://huggingface.co/docs/transformers/model_doc/jamba#transformers.JambaModel)

* [Llama](https://huggingface.co/docs/transformers/model_doc/llama#transformers.LlamaModel)

* [Llava](https://huggingface.co/docs/transformers/model_doc/llava)

* [Llava-NeXT](https://huggingface.co/docs/transformers/model_doc/llava_next)

* [Llava-NeXT-Video](https://huggingface.co/docs/transformers/model_doc/llava_next_video)

* [LLaVA-Onevision](https://huggingface.co/docs/transformers/model_doc/llava_onevision)

* [M2M100](https://huggingface.co/docs/transformers/model_doc/m2m_100#transformers.M2M100Model)

* [Mimi](https://huggingface.co/docs/transformers/model_doc/mimi)

* [Mistral](https://huggingface.co/docs/transformers/model_doc/mistral#transformers.MistralModel)

* [Mllama](https://huggingface.co/docs/transformers/model_doc/mllama#transformers.MllamaForConditionalGeneration)

* [Mixtral](https://huggingface.co/docs/transformers/model_doc/mixtral#transformers.MixtralModel)

* [Moshi](https://huggingface.co/docs/transformers/model_doc/moshi#transformers.MoshiModel)

* [Musicgen](https://huggingface.co/docs/transformers/model_doc/musicgen#transformers.MusicgenModel)

* [MusicGen Melody](https://huggingface.co/docs/transformers/model_doc/musicgen_melody#transformers.MusicgenMelodyModel)

* [NLLB](https://huggingface.co/docs/transformers/model_doc/nllb)

* [OLMo](https://huggingface.co/docs/transformers/model_doc/olmo#transformers.OlmoModel)

* [OLMoE](https://huggingface.co/docs/transformers/model_doc/olmoe#transformers.OlmoeModel)

* [OPT](https://huggingface.co/docs/transformers/en/model_doc/opt)

* [PaliGemma](https://huggingface.co/docs/transformers/model_doc/paligemma#transformers.PaliGemmaForConditionalGeneration)

* [Phi](https://huggingface.co/docs/transformers/model_doc/phi#transformers.PhiModel)

* [Phi3](https://huggingface.co/docs/transformers/model_doc/phi3#transformers.Phi3Model)

* [PhiMoE](https://huggingface.co/docs/transformers/model_doc/phimoe#transformers.PhimoeModel)

* [Idefics](https://huggingface.co/docs/transformers/model_doc/idefics#transformers.IdeficsModel)

* [Whisper](https://huggingface.co/docs/transformers/model_doc/whisper#transformers.WhisperModel)

* [mBart](https://huggingface.co/docs/transformers/model_doc/mbart#transformers.MBartModel)

* [Mistral](https://huggingface.co/docs/transformers/model_doc/mistral#transformers.MistralModel)

* [Mixtral](https://huggingface.co/docs/transformers/model_doc/mixtral#transformers.MixtralModel)

* [StableLm](https://huggingface.co/docs/transformers/model_doc/stablelm#transformers.StableLmModel)

* [Starcoder2](https://huggingface.co/docs/transformers/model_doc/starcoder2#transformers.Starcoder2Model)

* [Qwen2](https://huggingface.co/docs/transformers/model_doc/qwen2#transformers.Qwen2Model)

* [Qwen2Audio](https://huggingface.co/docs/transformers/model_doc/qwen2_audio#transformers.Qwen2AudioEncoder)

* [Qwen2MoE](https://huggingface.co/docs/transformers/model_doc/qwen2_moe#transformers.Qwen2MoeModel)

* [RoBERTa](https://huggingface.co/docs/transformers/model_doc/roberta#transformers.RobertaModel)

* [Sew](https://huggingface.co/docs/transformers/main/en/model_doc/sew#transformers.SEWModel)

* [SigLIP](https://huggingface.co/docs/transformers/model_doc/siglip)

* [StableLm](https://huggingface.co/docs/transformers/model_doc/stablelm#transformers.StableLmModel)

* [Starcoder2](https://huggingface.co/docs/transformers/model_doc/starcoder2#transformers.Starcoder2Model)

* [UniSpeech](https://huggingface.co/docs/transformers/v4.39.3/en/model_doc/unispeech#transformers.UniSpeechModel)

* [unispeech_sat](https://huggingface.co/docs/transformers/v4.39.3/en/model_doc/unispeech-sat#transformers.UniSpeechSatModel)

* [RoBERTa](https://huggingface.co/docs/transformers/model_doc/roberta#transformers.RobertaModel)

* [Qwen2VL](https://huggingface.co/docs/transformers/model_doc/qwen2_vl#transformers.Qwen2VLModel)

* [Musicgen](https://huggingface.co/docs/transformers/model_doc/musicgen#transformers.MusicgenModel)

* [MusicGen Melody](https://huggingface.co/docs/transformers/model_doc/musicgen_melody#transformers.MusicgenMelodyModel)

* [Nemotron](https://huggingface.co/docs/transformers/model_doc/nemotron)

* [SpeechEncoderDecoder](https://huggingface.co/docs/transformers/model_doc/speech_encoder_decoder#transformers.SpeechEncoderDecoderModel)

* [VideoLlava](https://huggingface.co/docs/transformers/model_doc/video_llava)

* [VipLlava](https://huggingface.co/docs/transformers/model_doc/vipllava)

* [VisionEncoderDecoder](https://huggingface.co/docs/transformers/model_doc/vision_encoder_decoder#transformers.VisionEncoderDecoderModel)

* [ViT](https://huggingface.co/docs/transformers/model_doc/vit#transformers.ViTModel)

* [ViTHybrid](https://huggingface.co/docs/transformers/model_doc/vit_hybrid#transformers.ViTHybridModel)

* [ViTMAE](https://huggingface.co/docs/transformers/model_doc/vit_mae#transformers.ViTMAEModel)

* [ViTMSN](https://huggingface.co/docs/transformers/model_doc/vit_msn#transformers.ViTMSNModel)

* [VisionTextDualEncoder](https://huggingface.co/docs/transformers/model_doc/vision_text_dual_encoder#transformers.VisionTextDualEncoderModel)

* [VideoMAE](https://huggingface.co/docs/transformers/model_doc/videomae#transformers.VideoMAEModell)

* [ViViT](https://huggingface.co/docs/transformers/model_doc/vivit#transformers.VivitModel)

* [wav2vec2](https://huggingface.co/docs/transformers/model_doc/wav2vec2#transformers.Wav2Vec2Model)

* [Whisper](https://huggingface.co/docs/transformers/model_doc/whisper#transformers.WhisperModel)

* [XLM-RoBERTa](https://huggingface.co/docs/transformers/model_doc/xlm-roberta#transformers.XLMRobertaModel)

* [XLM-RoBERTa-XL](https://huggingface.co/docs/transformers/model_doc/xlm-roberta-xl#transformers.XLMRobertaXLModel)

* [YOLOS](https://huggingface.co/docs/transformers/model_doc/yolos#transformers.YolosModel)

FlashAttention can only be used for models with the `fp16` or `bf16` torch type, so make sure to cast your model to the appropriate type first. The memory-efficient attention backend is able to handle `fp32` models.

SDPA does not support certain sets of attention parameters, such as `head_mask` and `output_attentions=True`.

In that case, you should see a warning message and we will fall back to the (slower) eager implementation.

By default, SDPA selects the most performant kernel available but you can check whether a backend is available in a given setting (hardware, problem size) with [`torch.backends.cuda.sdp_kernel`](https://pytorch.org/docs/master/backends.html#torch.backends.cuda.sdp_kernel) as a context manager:

```diff

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

model = AutoModelForCausalLM.from_pretrained("facebook/opt-350m", torch_dtype=torch.float16).to("cuda")

input_text = "Hello my dog is cute and"

inputs = tokenizer(input_text, return_tensors="pt").to("cuda")

+ with torch.backends.cuda.sdp_kernel(enable_flash=True, enable_math=False, enable_mem_efficient=False):

outputs = model.generate(**inputs)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

If you see a bug with the traceback below, try using the nightly version of PyTorch which may have broader coverage for FlashAttention:

```bash

RuntimeError: No available kernel. Aborting execution.

# install PyTorch nightly

pip3 install -U --pre torch torchvision torchaudio --index-url https://download.pytorch.org/whl/nightly/cu118

```

## BetterTransformer

Some BetterTransformer features are being upstreamed to Transformers with default support for native `torch.nn.scaled_dot_product_attention`. BetterTransformer still has a wider coverage than the Transformers SDPA integration, but you can expect more and more architectures to natively support SDPA in Transformers.

Check out our benchmarks with BetterTransformer and scaled dot product attention in the [Out of the box acceleration and memory savings of 🤗 decoder models with PyTorch 2.0](https://pytorch.org/blog/out-of-the-box-acceleration/) and learn more about the fastpath execution in the [BetterTransformer](https://medium.com/pytorch/bettertransformer-out-of-the-box-performance-for-huggingface-transformers-3fbe27d50ab2) blog post.

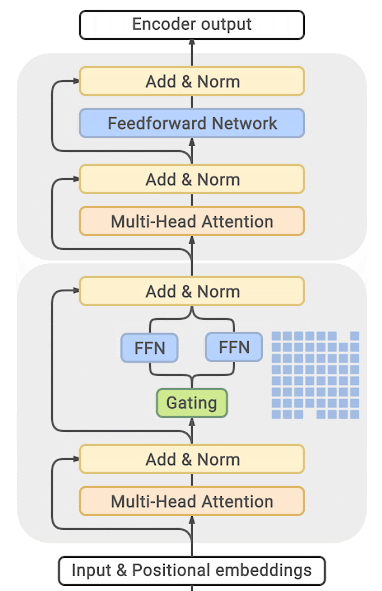

BetterTransformer accelerates inference with its fastpath (native PyTorch specialized implementation of Transformer functions) execution. The two optimizations in the fastpath execution are:

1. fusion, which combines multiple sequential operations into a single "kernel" to reduce the number of computation steps

2. skipping the inherent sparsity of padding tokens to avoid unnecessary computation with nested tensors

BetterTransformer also converts all attention operations to use the more memory-efficient [scaled dot product attention (SDPA)](https://pytorch.org/docs/master/generated/torch.nn.functional.scaled_dot_product_attention), and it calls optimized kernels like [FlashAttention](https://huggingface.co/papers/2205.14135) under the hood.

Before you start, make sure you have 🤗 Optimum [installed](https://huggingface.co/docs/optimum/installation).

Then you can enable BetterTransformer with the `PreTrainedModel.to_bettertransformer()` method:

```python

model = model.to_bettertransformer()

```

You can return the original Transformers model with the `reverse_bettertransformer()` method. You should use this before saving your model to use the canonical Transformers modeling:

```py

model = model.reverse_bettertransformer()

model.save_pretrained("saved_model")

```

## bitsandbytes

bitsandbytes is a quantization library that includes support for 4-bit and 8-bit quantization. Quantization reduces your model size compared to its native full precision version, making it easier to fit large models onto GPUs with limited memory.

Make sure you have bitsandbytes and 🤗 Accelerate installed:

```bash

# these versions support 8-bit and 4-bit

pip install bitsandbytes>=0.39.0 accelerate>=0.20.0

# install Transformers

pip install transformers

```

### 4-bit

To load a model in 4-bit for inference, use the `load_in_4bit` parameter. The `device_map` parameter is optional, but we recommend setting it to `"auto"` to allow 🤗 Accelerate to automatically and efficiently allocate the model given the available resources in the environment.

```py

from transformers import AutoModelForCausalLM

model_name = "bigscience/bloom-2b5"

model_4bit = AutoModelForCausalLM.from_pretrained(model_name, device_map="auto", load_in_4bit=True)

```

To load a model in 4-bit for inference with multiple GPUs, you can control how much GPU RAM you want to allocate to each GPU. For example, to distribute 600MB of memory to the first GPU and 1GB of memory to the second GPU:

```py

max_memory_mapping = {0: "600MB", 1: "1GB"}

model_name = "bigscience/bloom-3b"

model_4bit = AutoModelForCausalLM.from_pretrained(

model_name, device_map="auto", load_in_4bit=True, max_memory=max_memory_mapping

)

```

### 8-bit

If you're curious and interested in learning more about the concepts underlying 8-bit quantization, read the [Gentle Introduction to 8-bit Matrix Multiplication for transformers at scale using Hugging Face Transformers, Accelerate and bitsandbytes](https://huggingface.co/blog/hf-bitsandbytes-integration) blog post.

To load a model in 8-bit for inference, use the `load_in_8bit` parameter. The `device_map` parameter is optional, but we recommend setting it to `"auto"` to allow 🤗 Accelerate to automatically and efficiently allocate the model given the available resources in the environment:

```py

from transformers import AutoModelForCausalLM, BitsAndBytesConfig

model_name = "bigscience/bloom-2b5"

model_8bit = AutoModelForCausalLM.from_pretrained(model_name, quantization_config=BitsAndBytesConfig(load_in_8bit=True))

```

If you're loading a model in 8-bit for text generation, you should use the `generate()` method instead of the `Pipeline` function which is not optimized for 8-bit models and will be slower. Some sampling strategies, like nucleus sampling, are also not supported by the `Pipeline` for 8-bit models. You should also place all inputs on the same device as the model:

```py

from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

model_name = "bigscience/bloom-2b5"

tokenizer = AutoTokenizer.from_pretrained(model_name)

model_8bit = AutoModelForCausalLM.from_pretrained(model_name, quantization_config=BitsAndBytesConfig(load_in_8bit=True))

prompt = "Hello, my llama is cute"

inputs = tokenizer(prompt, return_tensors="pt").to("cuda")

generated_ids = model.generate(**inputs)

outputs = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)

```

To load a model in 4-bit for inference with multiple GPUs, you can control how much GPU RAM you want to allocate to each GPU. For example, to distribute 1GB of memory to the first GPU and 2GB of memory to the second GPU:

```py

max_memory_mapping = {0: "1GB", 1: "2GB"}

model_name = "bigscience/bloom-3b"

model_8bit = AutoModelForCausalLM.from_pretrained(

model_name, device_map="auto", load_in_8bit=True, max_memory=max_memory_mapping

)

```

Feel free to try running a 11 billion parameter [T5 model](https://colab.research.google.com/drive/1YORPWx4okIHXnjW7MSAidXN29mPVNT7F?usp=sharing) or the 3 billion parameter [BLOOM model](https://colab.research.google.com/drive/1qOjXfQIAULfKvZqwCen8-MoWKGdSatZ4?usp=sharing) for inference on Google Colab's free tier GPUs!

## 🤗 Optimum

Learn more details about using ORT with 🤗 Optimum in the [Accelerated inference on NVIDIA GPUs](https://huggingface.co/docs/optimum/onnxruntime/usage_guides/gpu#accelerated-inference-on-nvidia-gpus) and [Accelerated inference on AMD GPUs](https://huggingface.co/docs/optimum/onnxruntime/usage_guides/amdgpu#accelerated-inference-on-amd-gpus) guides. This section only provides a brief and simple example.

ONNX Runtime (ORT) is a model accelerator that supports accelerated inference on Nvidia GPUs, and AMD GPUs that use [ROCm](https://www.amd.com/en/products/software/rocm.html) stack. ORT uses optimization techniques like fusing common operations into a single node and constant folding to reduce the number of computations performed and speedup inference. ORT also places the most computationally intensive operations on the GPU and the rest on the CPU to intelligently distribute the workload between the two devices.

ORT is supported by 🤗 Optimum which can be used in 🤗 Transformers. You'll need to use an [ORTModel](https://huggingface.co/docs/optimum/main/en/onnxruntime/package_reference/modeling_ort#optimum.onnxruntime.ORTModel) for the task you're solving, and specify the `provider` parameter which can be set to either [`CUDAExecutionProvider`](https://huggingface.co/docs/optimum/onnxruntime/usage_guides/gpu#cudaexecutionprovider), [`ROCMExecutionProvider`](https://huggingface.co/docs/optimum/onnxruntime/usage_guides/amdgpu) or [`TensorrtExecutionProvider`](https://huggingface.co/docs/optimum/onnxruntime/usage_guides/gpu#tensorrtexecutionprovider). If you want to load a model that was not yet exported to ONNX, you can set `export=True` to convert your model on-the-fly to the ONNX format:

```py

from optimum.onnxruntime import ORTModelForSequenceClassification

ort_model = ORTModelForSequenceClassification.from_pretrained(

"distilbert/distilbert-base-uncased-finetuned-sst-2-english",

export=True,

provider="CUDAExecutionProvider",

)

```

Now you're free to use the model for inference:

```py

from optimum.pipelines import pipeline

from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("distilbert/distilbert-base-uncased-finetuned-sst-2-english")

pipeline = pipeline(task="text-classification", model=ort_model, tokenizer=tokenizer, device="cuda:0")

result = pipeline("Both the music and visual were astounding, not to mention the actors performance.")

```

## Combine optimizations

It is often possible to combine several of the optimization techniques described above to get the best inference performance possible for your model. For example, you can load a model in 4-bit, and then enable BetterTransformer with FlashAttention:

```py

import torch

from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

# load model in 4-bit

quantization_config = BitsAndBytesConfig(

load_in_4bit=True,

bnb_4bit_compute_dtype=torch.float16

)

tokenizer = AutoTokenizer.from_pretrained("facebook/opt-350m")

model = AutoModelForCausalLM.from_pretrained("facebook/opt-350m", quantization_config=quantization_config)

# enable BetterTransformer

model = model.to_bettertransformer()

input_text = "Hello my dog is cute and"

inputs = tokenizer(input_text, return_tensors="pt").to("cuda")

# enable FlashAttention

with torch.backends.cuda.sdp_kernel(enable_flash=True, enable_math=False, enable_mem_efficient=False):

outputs = model.generate(**inputs)

print(tokenizer.decode(outputs[0], skip_special_tokens=True))

```

# Distributed training with 🤗 Accelerate

As models get bigger, parallelism has emerged as a strategy for training larger models on limited hardware and accelerating training speed by several orders of magnitude. At Hugging Face, we created the [🤗 Accelerate](https://huggingface.co/docs/accelerate) library to help users easily train a 🤗 Transformers model on any type of distributed setup, whether it is multiple GPU's on one machine or multiple GPU's across several machines. In this tutorial, learn how to customize your native PyTorch training loop to enable training in a distributed environment.

## Setup

Get started by installing 🤗 Accelerate:

```bash

pip install accelerate

```

Then import and create an [Accelerator](https://huggingface.co/docs/accelerate/main/en/package_reference/accelerator#accelerate.Accelerator) object. The [Accelerator](https://huggingface.co/docs/accelerate/main/en/package_reference/accelerator#accelerate.Accelerator) will automatically detect your type of distributed setup and initialize all the necessary components for training. You don't need to explicitly place your model on a device.

```py

>>> from accelerate import Accelerator

>>> accelerator = Accelerator()

```

## Prepare to accelerate

The next step is to pass all the relevant training objects to the [prepare](https://huggingface.co/docs/accelerate/main/en/package_reference/accelerator#accelerate.Accelerator.prepare) method. This includes your training and evaluation DataLoaders, a model and an optimizer:

```py

>>> train_dataloader, eval_dataloader, model, optimizer = accelerator.prepare(

... train_dataloader, eval_dataloader, model, optimizer

... )

```

## Backward

The last addition is to replace the typical `loss.backward()` in your training loop with 🤗 Accelerate's [backward](https://huggingface.co/docs/accelerate/main/en/package_reference/accelerator#accelerate.Accelerator.backward) method:

```py

>>> for epoch in range(num_epochs):

... for batch in train_dataloader:

... outputs = model(**batch)

... loss = outputs.loss

... accelerator.backward(loss)

... optimizer.step()

... lr_scheduler.step()

... optimizer.zero_grad()

... progress_bar.update(1)

```

As you can see in the following code, you only need to add four additional lines of code to your training loop to enable distributed training!

```diff

+ from accelerate import Accelerator

from transformers import AdamW, AutoModelForSequenceClassification, get_scheduler

+ accelerator = Accelerator()

model = AutoModelForSequenceClassification.from_pretrained(checkpoint, num_labels=2)

optimizer = AdamW(model.parameters(), lr=3e-5)

- device = torch.device("cuda") if torch.cuda.is_available() else torch.device("cpu")

- model.to(device)

+ train_dataloader, eval_dataloader, model, optimizer = accelerator.prepare(

+ train_dataloader, eval_dataloader, model, optimizer

+ )

num_epochs = 3

num_training_steps = num_epochs * len(train_dataloader)

lr_scheduler = get_scheduler(

"linear",

optimizer=optimizer,

num_warmup_steps=0,

num_training_steps=num_training_steps

)

progress_bar = tqdm(range(num_training_steps))

model.train()

for epoch in range(num_epochs):

for batch in train_dataloader:

- batch = {k: v.to(device) for k, v in batch.items()}

outputs = model(**batch)

loss = outputs.loss

- loss.backward()

+ accelerator.backward(loss)

optimizer.step()

lr_scheduler.step()

optimizer.zero_grad()

progress_bar.update(1)

```

## Train

Once you've added the relevant lines of code, launch your training in a script or a notebook like Colaboratory.

### Train with a script

If you are running your training from a script, run the following command to create and save a configuration file:

```bash

accelerate config

```

Then launch your training with:

```bash

accelerate launch train.py

```

### Train with a notebook

🤗 Accelerate can also run in a notebook if you're planning on using Colaboratory's TPUs. Wrap all the code responsible for training in a function, and pass it to [notebook_launcher](https://huggingface.co/docs/accelerate/main/en/package_reference/launchers#accelerate.notebook_launcher):

```py

>>> from accelerate import notebook_launcher

>>> notebook_launcher(training_function)

```

For more information about 🤗 Accelerate and its rich features, refer to the [documentation](https://huggingface.co/docs/accelerate).

# Best Practices for Generation with Cache

Efficient caching is crucial for optimizing the performance of models in various generative tasks,

including text generation, translation, summarization and other transformer-based applications.

Effective caching helps reduce computation time and improve response rates, especially in real-time or resource-intensive applications.

Transformers support various caching methods, leveraging "Cache" classes to abstract and manage the caching logic.

This document outlines best practices for using these classes to maximize performance and efficiency.

Check out all the available `Cache` classes in the [API documentation](./internal/generation_utils).

## What is Cache and why we should care?

Imagine you’re having a conversation with someone, and instead of remembering what was said previously, you have to start from scratch every time you respond. This would be slow and inefficient, right? In the world of Transformer models, a similar concept applies, and that's where Caching keys and values come into play. From now on, I'll refer to the concept as KV Cache.

KV cache is needed to optimize the generation in autoregressive models, where the model predicts text token by token. This process can be slow since the model can generate only one token at a time, and each new prediction is dependent on the previous context. That means, to predict token number 1000 in the generation, you need information from the previous 999 tokens, which comes in the form of some matrix multiplications across the representations of those tokens. But to predict token number 1001, you also need the same information from the first 999 tokens, plus additional information from token number 1000. That is where key-value cache is used to optimize the sequential generation process by storing previous calculations to reuse in subsequent tokens, so they don't need to be computed again.

More concretely, key-value cache acts as a memory bank for these generative models, where the model stores key-value pairs derived from self-attention layers for previously processed tokens. By storing this information, the model can avoid redundant computations and instead retrieve keys and values of previous tokens from the cache. Note that caching can be used only in inference and should be disabled when training, otherwise it might cause unexpected errors.

For the Curious Minds Who Like to Dive Deep

### Under the Hood: How Cache Object Works in Attention Mechanism

When utilizing a cache object in the input, the Attention module performs several critical steps to integrate past and present information seamlessly.

The Attention module concatenates the current key-values with the past key-values stored in the cache. This results in attention weights of shape `(new_tokens_length, past_kv_length + new_tokens_length)`. Essentially, the past and current key-values are combined to compute attention scores, ensuring that the model considers both previous context and new input. The concatenated key-values are used to compute the attention scores resulting in attention weights of shape `(new_tokens_length, past_kv_length + new_tokens_length)`.

Therefore, when iteratively calling `forward()` instead of the `generate()` method, it’s crucial to ensure that the attention mask shape matches the combined length of past and current key-values. The attention mask should have the shape `(batch_size, past_kv_length + new_tokens_length)`. This is usually handled internally when you call `generate()` method. If you want to implement your own generation loop with Cache classes, take this into consideration and prepare the attention mask to hold values to current and past tokens.

One important concept you need to know when writing your own generation loop, is `cache_position`. In case you want to reuse an already filled Cache object by calling `forward()`, you have to pass in a valid `cache_position` which will indicate the positions of inputs in the sequence. Note that `cache_position` is not affected by padding, and always adds one more position for each token. For example, if key/value cache contains 10 tokens (no matter how many of it is a pad token), the cache position for the next token should be `torch.tensor([10])`.

See an example below for how to implement your own generation loop.

```python

>>> import torch

>>> from transformers import AutoTokenizer, AutoModelForCausalLM, DynamicCache

>>> model_id = "meta-llama/Llama-2-7b-chat-hf"

>>> model = AutoModelForCausalLM.from_pretrained(model_id, torch_dtype=torch.bfloat16, device_map="cuda:0")

>>> tokenizer = AutoTokenizer.from_pretrained(model_id)

>>> past_key_values = DynamicCache()

>>> messages = [{"role": "user", "content": "Hello, what's your name."}]

>>> inputs = tokenizer.apply_chat_template(messages, add_generation_prompt=True, return_tensors="pt", return_dict=True).to("cuda:0")

>>> generated_ids = inputs.input_ids

>>> cache_position = torch.arange(inputs.input_ids.shape[1], dtype=torch.int64, device="cuda:0")

>>> max_new_tokens = 10

>>> for _ in range(max_new_tokens):

... outputs = model(**inputs, cache_position=cache_position, past_key_values=past_key_values, use_cache=True)

... # Greedily sample one next token

... next_token_ids = outputs.logits[:, -1:].argmax(-1)

... generated_ids = torch.cat([generated_ids, next_token_ids], dim=-1)

...

... # Prepare inputs for the next generation step by leaaving unprocessed tokens, in our case we have only one new token

... # and expanding attn mask for the new token, as explained above

... attention_mask = inputs["attention_mask"]

... attention_mask = torch.cat([attention_mask, attention_mask.new_ones((attention_mask.shape[0], 1))], dim=-1)

... inputs = {"input_ids": next_token_ids, "attention_mask": attention_mask}

... cache_position = cache_position[-1:] + 1 # add one more position for the next token

>>> print(tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0])

"[INST] Hello, what's your name. [/INST] Hello! My name is LLaMA,"

```

## Generate with Cache

In 🤗 Transformers, we support various Cache types to optimize the performance across different models and tasks. By default, all models generate with caching,

with the `~DynamicCache` class being the default cache for most models. It allows us to dynamically grow cache size, by saving more and more keys and values as we generate. If for some reason you don't want to use caches, you can pass `use_cache=False` into the `generate()` method.

Refer to the table below to see the difference between cache types and choose the one that suits best for your use-case. Models for which initialization is recommended should be initialized before calling the model and passed to model as a kwarg. In all other cases you can simply define desired `cache_implementation` and we take care of the rest for you.

| Cache Type | Memory Efficient | Supports torch.compile() | Initialization Recommended | Latency | Long Context Generation |

|------------------------|------------------|--------------------------|----------------------------|---------|-------------------------|

| Dynamic Cache | No | No | No | Mid | No |

| Static Cache | No | Yes | Yes | High | No |

| Offloaded Cache | Yes | No | No | Low | Yes |

| Offloaded Static Cache | No | Yes | Yes | High | Yes |

| Quantized Cache | Yes | No | No | Low | Yes |

| Sliding Window Cache | No | Yes | Yes | High | No |

| Sink Cache | Yes | No | Yes | Mid | Yes |

These cache classes can be set with a `cache_implementation` argument when generating. To learn about the available options for the cache_implementation flag, please refer to the [API Documentation](./main_classes/text_generation#transformers.GenerationConfig). Now, let's explore each cache type in detail and see how to use them. Note that the below examples are for decoder-only Tranformer-based models. We also support ["Model-Specific Cache"] classes for models such as Mamba or Jamba, keep reading for more details.

### Quantized Cache